Co-written by Ankur Banerjee (CTO/co-founder), Ross Power (Product Manager), and Alex Tweeddale (Governance Lead)

Overview

As strong proponents of open source software, the cheqd Engineering & Product team has spent a lot of effort over the past month polishing and open-sourcing products we’ve been developing for decentralised identity and the Cosmos SDK framework. Some of these tools are core to delivering our product roadmap vision, while others are tools we built for internal use and believe will be valuable for developers outside our company.

Most of the Cosmos SDK blockchain framework, as well as self-sovereign identity (SSI) code, is built by community-led efforts where developers open source their code and make it available for free. Or at least, that’s how it’s supposed to work. In practice, unfortunately, very few companies or developers contribute code “upstream” or make it available to others, leading to the “one random person in Nebraska” problem.

Source: xkcd #2347 “Dependency”

While the crypto markets have taken a hit, we believe there is no better time to get our heads down to build, innovate, and iterate on new products and tools.

Our intention is to enable others to benefit from our work. For each product or tool that we are releasing under an open source license (Apache 2.0), we explain what the unique value proposition is, and which audience could benefit from the work we have done.

Our work is largely split between:

- Core identity functionality which is integral to our product and work in the Self-Sovereign Identity ecosystem; and

- Helpful tooling, infrastructure and analytics packages for Cosmos SDK, which can be easily adopted by other Cosmos/IBC chain developers.

Core Identity Functionality

EASILY READ DECENTRALIZED IDENTIFIERS (DIDS) ON CHEQD NETWORK

Github repository: cheqd/did-resolver

We released the cheqd DID method in late 2021, creating a way for anyone to write permanent and tamper-proof identifiers that act as the root of trust for issuing digital credentials. The functionality to read/write Decentralized Identifiers (DIDs) has existed since the beginning of the network, for example when we published the first DID on our network or ran an Easter egg hunt for our community with clues hidden in DIDs. We wanted to go further, though, and provide “resolver” or “reader” software that makes it easy for app developers to use this functionality themselves. This was one of our primary goals for the first half of 2022.

Having DID resolver software is important since reading DIDs from a network happens disproportionately more often than writing DIDs to a network. Think about the act of getting a Twitter username or a domain name: you sign up and create the handle once, and then use it as many times as you want to send new tweets, or to publish new web pages on your domain. This is similar to writing a DID once, and using it to publish digitally verifiable credentials many times. The recipients of those credentials can use a DID resolver to read the on-ledger keys needed to verify that the credentials they received have not been tampered with.

Many decentralised digital identity projects use the Decentralized Identity Foundation (DIF) Universal Resolver project to carry out DID reads/resolution. Therefore, we have made a cheqd DID Resolver available under an open-source license, and we’re working with DIF to get this integrated upstream into the Universal Resolver project.
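
To make the resolution step concrete, here is a minimal Python sketch (not the cheqd/did-resolver code, which is written in Go) that validates a DID of the assumed form `did:cheqd:<namespace>:<id>` and builds a Universal Resolver-style request URL, `<base>/1.0/identifiers/<did>`. The resolver base URL and the set of namespaces are assumptions; point it at whichever resolver instance you run.

```python
from urllib.parse import quote

# Hypothetical base URL: replace with the resolver instance you actually use.
RESOLVER_BASE_URL = "https://resolver.example.com"

# Assumption: cheqd DIDs carry a network namespace of "mainnet" or "testnet".
KNOWN_NAMESPACES = {"mainnet", "testnet"}


def parse_cheqd_did(did: str) -> dict:
    """Split a DID of the assumed form did:cheqd:<namespace>:<unique-id>."""
    parts = did.split(":")
    if len(parts) != 4 or parts[0] != "did" or parts[1] != "cheqd":
        raise ValueError(f"not a did:cheqd identifier: {did!r}")
    namespace, unique_id = parts[2], parts[3]
    if namespace not in KNOWN_NAMESPACES:
        raise ValueError(f"unknown cheqd namespace: {namespace!r}")
    if not unique_id:
        raise ValueError("empty method-specific identifier")
    return {"method": "cheqd", "namespace": namespace, "id": unique_id}


def resolution_url(did: str, base_url: str = RESOLVER_BASE_URL) -> str:
    """Build a Universal Resolver-style GET URL: <base>/1.0/identifiers/<did>."""
    parse_cheqd_did(did)  # reject malformed DIDs before building the URL
    return f"{base_url.rstrip('/')}/1.0/identifiers/{quote(did, safe=':')}"
```

A client would then issue an HTTP GET to that URL and receive the DID document (plus resolution metadata) as JSON.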

The DID resolver we’ve made available is a “full” profile resolver, which is written in Golang (same as our ledger/node software) and can do authenticated/verified data reads from any node on the cheqd network over its gRPC API endpoint. This will likely be required by app developers or partners looking at processing high volumes of DID resolution requests since they can pull the data from their own cheqd node, and/or if they want the highest levels of assurance that the data pulled was untampered.

We also plan on releasing a “light” profile DID Resolver, built as a tiny Node.js application designed to run on Cloudflare Workers (a serverless hosting platform with extremely quick “cold start” times). This will allow app developers who don’t want to run a full node + full DID Resolver to be able to run their own, extremely scalable, and lightweight infrastructure for servicing DID read requests.

In short, this architecture improves:

- Accessibility to cheqd DIDs

- Flexibility, offering app developers and partners optionality and choice of platforms to run on, according to their security/scalability needs, and at various different levels of how much it costs to run this infrastructure.

ISSUE AND VERIFY DIGITAL CREDENTIALS USING VERAMO CLIENT-APP SDK ON THE CHEQD NETWORK

Github repositories: cheqd/did-provider-cheqd, cheqd/did-jwt-vc

We’ve been working hard following our identity wallet demo at Internet Identity Workshop in April 2022 to make this functionality available to every app developer. We’re excited to announce that you can now issue JSON / JWT-encoded Verifiable Credentials using a plugin for the cheqd network we built for Veramo.

Veramo is an existing open-source JavaScript software development kit (SDK) for Verifiable Credentials. We recognise that app developers and our SSI vendor partners have their own preferred SDKs and languages, based on credential formats. We chose to implement the JWT/JSON Verifiable Credentials (VCs) using Veramo since it has a highly-flexible and modular architecture. This allowed us to create a plugin to add support for the cheqd network, without having to rewrite a lot of code for basic DID and VC functionality from scratch.
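
Veramo and the cheqd plugin handle issuance for you. To show what a JWT-encoded credential contains, the sketch below uses Python's standard library (not Veramo's TypeScript API) to build the unsigned `header.payload` string following the W3C VC Data Model's JWT mapping (`iss`, `sub`, `nbf`, `vc`). The DIDs, `kid` and claim values are hypothetical, and signing with the issuer's key is deliberately omitted.

```python
import base64
import json


def b64url_encode(data: bytes) -> str:
    """Base64url without padding, as used for JWS/JWT segments."""
    return base64.urlsafe_b64encode(data).rstrip(b"=").decode("ascii")


def b64url_decode(segment: str) -> bytes:
    """Inverse of b64url_encode: restore the stripped padding, then decode."""
    return base64.urlsafe_b64decode(segment + "=" * (-len(segment) % 4))


def _encode_json(obj: dict) -> str:
    return b64url_encode(json.dumps(obj, separators=(",", ":")).encode("utf-8"))


def vc_jwt_signing_input(issuer_did: str, subject_did: str, claims: dict,
                         issued_at: int, kid: str) -> str:
    """Return the unsigned `header.payload` string for a JWT-encoded credential.

    An issuer would sign this string with the private key referenced by `kid`
    (assumed here to be an Ed25519 key, JWS alg "EdDSA") and append the
    signature as a third segment. Signing is omitted in this sketch.
    """
    header = {"alg": "EdDSA", "typ": "JWT", "kid": kid}
    payload = {
        "iss": issuer_did,   # the credential's issuer
        "sub": subject_did,  # credentialSubject.id
        "nbf": issued_at,    # issuanceDate, in seconds since the Unix epoch
        "vc": {
            "@context": ["https://www.w3.org/2018/credentials/v1"],
            "type": ["VerifiableCredential"],
            "credentialSubject": claims,
        },
    }
    return f"{_encode_json(header)}.{_encode_json(payload)}"


# Usage with hypothetical identifiers.
example = vc_jwt_signing_input(
    issuer_did="did:cheqd:testnet:zIssuer",
    subject_did="did:cheqd:testnet:zHolder",
    claims={"name": "Example"},
    issued_at=1656633600,
    kid="did:cheqd:testnet:zIssuer#key-1",
)
header_segment, payload_segment = example.split(".")
decoded_payload = json.loads(b64url_decode(payload_segment))
```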

You can try out a reference implementation of how you as an app developer can build your own applications using our Veramo SDK plugin and ledger on the cheqd wallet web app (more on this later).

We’re also working on supporting other popular credential formats, such as AnonCreds, as we know there’s interest in this from many of our SSI vendors/partners.

Why is this valuable?

cheqd’s objective is to provide its partners with a highly scalable, performant Layer 1 which can support payment rails and customisable commercial models for Verifiable Credentials. In order to showcase the baseline identity functionality, it was important to build tooling to issue and verify Credentials using an existing SDK. Veramo was a perfect SDK to build an initial plugin for cheqd, due to its already modular architecture.

This repository therefore showcases that cheqd has:

- Functional capability for signing Credentials using its DID method

- Functional capability for carrying out DID authentication to verify Credentials

- Ability for cheqd to plug into existing SDKs, which we hope to expand to other SDKs provided by our partners and the wider SSI community.
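
One step in DID-based verification is checking that the key which signed a credential is one the issuer's DID document authorises for issuing, i.e. one listed under `assertionMethod`. The minimal Python sketch below performs that lookup on a W3C DID Core-style document that a resolver has already returned; the example document is hypothetical, and the actual signature check with the returned key is left out.

```python
def _absolute(did: str, ref: str) -> str:
    """Expand a relative DID URL such as '#key-1' against the document's own DID."""
    return did + ref if ref.startswith("#") else ref


def find_assertion_method(did_document: dict, kid: str) -> dict:
    """Return the verification method `kid` if the document lists it under assertionMethod."""
    did = did_document["id"]
    kid = _absolute(did, kid)
    declared = {_absolute(did, vm["id"]): vm for vm in did_document.get("verificationMethod", [])}
    for entry in did_document.get("assertionMethod", []):
        if isinstance(entry, str):
            # A reference to a method declared under verificationMethod.
            if _absolute(did, entry) == kid and kid in declared:
                return declared[kid]
        elif _absolute(did, entry.get("id", "")) == kid:
            # A verification method embedded directly in assertionMethod.
            return entry
    raise LookupError(f"{kid} is not an assertionMethod of {did}")


def check_issuer_key(iss: str, kid: str, did_document: dict) -> dict:
    """Confirm the resolved document belongs to the JWT issuer, then return the signing key entry."""
    if did_document.get("id") != iss:
        raise ValueError("resolved DID document does not belong to the issuer")
    return find_assertion_method(did_document, kid)


# Usage with a hypothetical DID document (the public key value is a placeholder).
example_doc = {
    "id": "did:cheqd:testnet:zIssuer",
    "verificationMethod": [{
        "id": "did:cheqd:testnet:zIssuer#key-1",
        "type": "Ed25519VerificationKey2020",
        "controller": "did:cheqd:testnet:zIssuer",
        "publicKeyMultibase": "zPLACEHOLDER",
    }],
    "assertionMethod": ["did:cheqd:testnet:zIssuer#key-1"],
}
signing_key = check_issuer_key("did:cheqd:testnet:zIssuer", "#key-1", example_doc)
```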

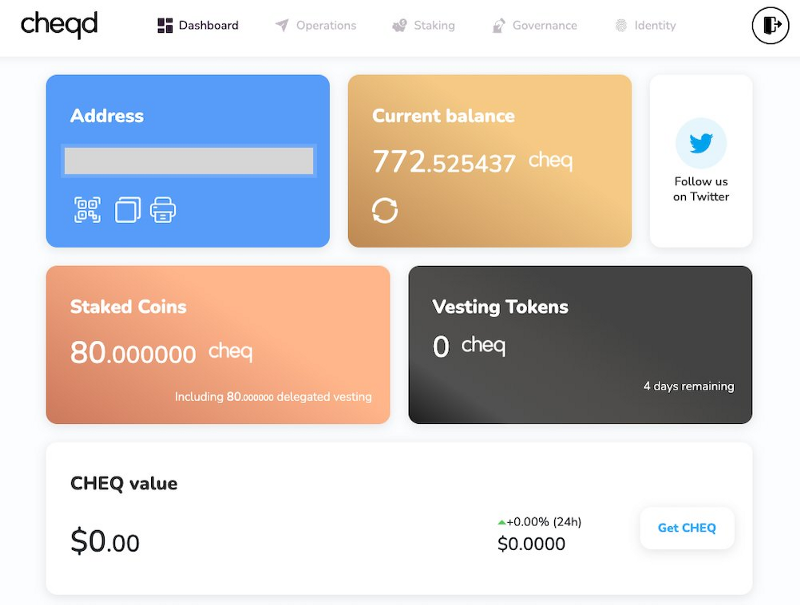

STAKE, DELEGATE AND HOLD CREDENTIALS IN A FIRST-PARTY CHEQD WEB-APP WALLET FOR CHEQ TOKEN HOLDERS

In April 2022, we demoed wallet.cheqd.io at Internet Identity Workshop (IIW34) in San Francisco to show a recoverable, non-custodial wallet where users can stake, delegate and vote with CHEQ tokens AND hold W3C Verifiable Credentials.

To build the demo wallet, we forked the Lum wallet, an existing Cosmos project. By adding new identity features to an already great foundation, we’ve been able to speed up our journey to get a Verifiable Credential in a web-based wallet.

Whilst we continue to expand it, we’ve open-sourced the cheqd-wallet repo to enable our partners, other SSI vendors and interested developers to:

- View and test out the functionality in their own environments, and

- Build on, extend and replicate the wallet’s utility in their own software and wallets.

In the coming weeks we’ll be launching some exciting features which will really bring this wallet to life for the @cheqd_io community and beyond… so watch this space!

Try out the @cheqd_io demo yourself at wallet.cheqd.io, get a Verifiable Credential, and read more about how we built it in our CTO Ankur Banerjee’s tweetstorm.

Why is this valuable?

So far, this wallet has been used for demo purposes. Moving forward, however, we would love to showcase the real value of Verifiable Credentials by issuing our community their own VCs for different reasons, all of which could be stored, verified and backed up using the cheqd wallet. Demonstrating our technology in a wallet like this makes it easier for new community members and partners to visualise and understand the value of everything we’re building on the identity front.

Oven-ready tooling, infrastructure and analytics packages

STREAMLINING NODE SETUP AND MANAGEMENT WITH INFRASTRUCTURE-AS-CODE

Github repository: cheqd/infra

Over the past months we’ve been implementing various tools to improve performance, speed up node setup and reduce manual effort for our team and external developers as much as possible. We wanted to make installing and running cheqd nodes easy, so our automation lets people deploy secure, out-of-the-box node configurations efficiently and at low cost.

Terraform: Infra-as-code

We have started using HashiCorp’s Terraform to define consistent and automated workflows, in order to improve efficiency and streamline the process of setting up a node on cheqd. Terraform is an infrastructure-as-code tool: infrastructure is managed and provisioned through code instead of through manual processes. You can think of it like dominoes: one click of a button can result in a whole series of outcomes.

This automation gives prospective network Validators the choice of whether they want to just install a validator node (using our install instructions), or whether they want to set up a sentry+validator architecture for more security.

Terragrunt: Infra-as-code

Terragrunt works hand-in-hand with Terraform, making code more modular, reducing repetition and facilitating different configurations of code for different use cases. You can plug in config information like CPU, RAM, static IPs, storage, etc., which speeds things up whilst making the code more modular and reusable.

Through the use of Terragrunt, we are also able to extend our infrastructure to a wider range of cloud providers. This is important since our infrastructure code only works directly with the Hetzner and DigitalOcean cloud providers (chosen for their good balance of cost vs performance), but we recognise that many people use AWS or Azure. Terragrunt therefore acts as a wrapper that makes our infrastructure available on Hetzner and DigitalOcean, while making it easier to use with AWS or Azure.

Ansible: Infra-as-code

Ansible allows node operators to update software on their nodes, carry out configuration changes etc, during the first install and subsequent maintenance. In a similar way to Terragrunt, Ansible code can also act as a wrapper, converting the code established via Terragrunt and Terraform into more cross-compatible formats.

Using Ansible, the same configurations created for setting up nodes on cheqd could be packaged in a format which could be consumed by other Cosmos networks. Therefore, this could have a knock-on effect for benefiting the entire Cosmos ecosystem for running sentry+validator infrastructure.

DataDog: Monitoring

DataDog is a SaaS-based data analytics platform that monitors servers, databases, tools and services. You can think of it like a task manager on your laptop. Using DataDog, we keep an eye on metrics from Tendermint (e.g. whether a validator double-signs a block) and the Cosmos SDK (e.g. transactions per day).

This is valuable to ensure the network runs smoothly & any security vulnerabilities/issues that may impact consensus are quickly resolved.
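
DataDog ships its own agent and integrations, so the sketch below is not how DataDog works internally. It only illustrates, in plain Python, the kind of check a monitor can run against a Tendermint node's Prometheus metrics (exposed when `prometheus = true` is set in `config.toml`, by default on port 26660). The metric names follow Tendermint's consensus metrics but may differ between versions, and the alert rules are hypothetical.

```python
import re
import urllib.request

# Matches "name{labels} value" or "name value" lines of the Prometheus text format.
METRIC_LINE = re.compile(r"^([a-zA-Z_:][a-zA-Z0-9_:]*)(\{[^}]*\})?\s+(\S+)")


def parse_metrics(text: str) -> dict:
    """Map metric name -> value. Labels are ignored, so the last series of a name wins."""
    values = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):  # skip blank lines and HELP/TYPE comments
            continue
        match = METRIC_LINE.match(line)
        if match:
            values[match.group(1)] = float(match.group(3))
    return values


def consensus_alerts(metrics: dict) -> list:
    """Hypothetical alert rules based on Tendermint consensus metrics."""
    alerts = []
    if metrics.get("tendermint_consensus_byzantine_validators", 0) > 0:
        alerts.append("byzantine validators detected (possible double signing)")
    if metrics.get("tendermint_consensus_missing_validators", 0) > 0:
        alerts.append("validators missing from the last block")
    return alerts


def fetch_metrics(url: str = "http://localhost:26660/metrics") -> dict:
    """Scrape a node's Prometheus endpoint (assumed default address)."""
    with urllib.request.urlopen(url, timeout=5) as response:
        return parse_metrics(response.read().decode("utf-8"))
```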

Cloudflare Teams: Role Management (SSH)

When managing a network it’s important that those building it can gain access when they need it. For this we’ve been using Cloudflare Teams to SSH into one of our nodes.

SSH (Secure Shell) is a communication protocol that enables two computers to communicate securely, providing password or public-key based authentication and encrypted connections between two network endpoints.

This work is important because other Cosmos networks can reuse the role management package to reduce the time spent on configuring their own role management processes for SSH.

HashiCorp Vault: Secret Sharing

Sharing secrets securely is vital; for this we use HashiCorp Vault, together with a script that copies private keys and node keys into a vault. You can think of this like LastPass or 1Password for network secrets (e.g. private keys). This way, if for example a node is accidentally deleted along with a validator’s private key, it’s easy to restore it.

This is hugely valuable for validator operators, who may want to add an extra layer of security when backing up private keys and sharing keys between people internally. Moreover, by using HashiCorp Vault, we hope to reduce the risk of teams losing their private keys and, with them, the ability to properly manage their nodes.
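
Assuming a Vault KV version 2 secrets engine mounted at `secret/`, backing up a node's Tendermint key files is a single authenticated write to `/v1/<mount>/data/<path>`. The sketch below only builds that request with Python's standard library; the Vault address, mount, secret path and token are hypothetical, and it is not the script from cheqd/infra.

```python
import json
import urllib.request
from pathlib import Path

# Hypothetical Vault address; a KV v2 secrets engine is assumed to be mounted at "secret/".
VAULT_ADDR = "https://vault.example.com:8200"
KEY_FILES = ("priv_validator_key.json", "node_key.json")  # Tendermint key files in <home>/config


def build_backup_request(node_name: str, config_dir: str, token: str,
                         vault_addr: str = VAULT_ADDR,
                         mount: str = "secret") -> urllib.request.Request:
    """Build (but do not send) a KV v2 write storing a node's key files under <mount>/<node_name>."""
    config = Path(config_dir)
    secrets = {name: (config / name).read_text() for name in KEY_FILES}
    body = json.dumps({"data": secrets}).encode("utf-8")  # KV v2 expects secrets under "data"
    return urllib.request.Request(
        f"{vault_addr}/v1/{mount}/data/{node_name}",
        data=body,
        method="POST",
        headers={"X-Vault-Token": token, "Content-Type": "application/json"},
    )
```

Sending it is then a call to `urllib.request.urlopen(request)`; restoring a lost key means reading the same path back with a GET.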

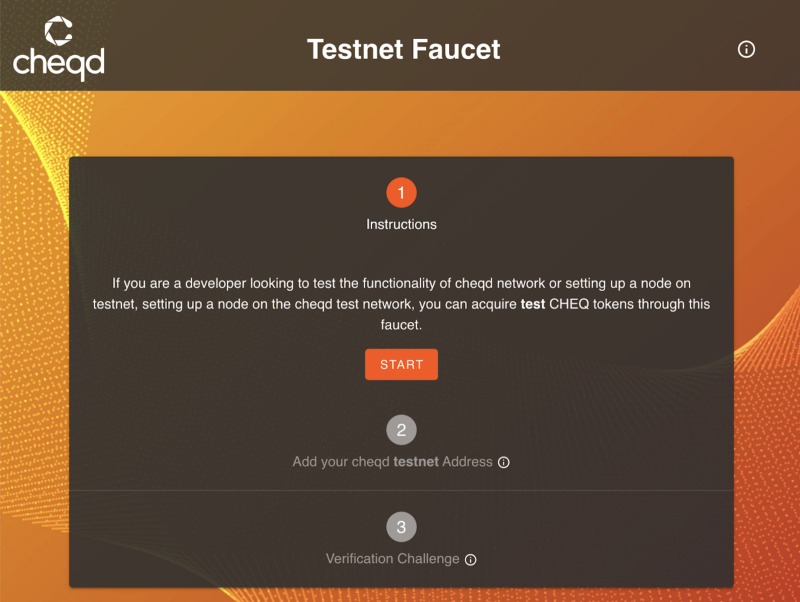

AUTOMATED DISTRIBUTION OF CHEQ TEST TOKENS WITH OUR TESTNET FAUCET

Github repository: cheqd/faucet-ui

The cheqd testnet faucet is a self-serve site that allows app developers and node operators who want to try out our identity functionality or node operations to request test CHEQ tokens, without having to spend money to acquire “real” CHEQ tokens on mainnet.

We built this using Cloudflare Pages, as it provides a fast way to create serverless applications that scale up and down dynamically with traffic, which suits something like a testnet faucet that may not receive consistent levels of traffic. The faucet’s backend uses an existing CosmJS faucet app to handle requests, running as a DigitalOcean app packaged with a Dockerfile.
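
For developers scripting against a faucet like this, a credit request might look like the following sketch. It assumes the backend is the CosmJS faucet (`@cosmjs/faucet`), which accepts `POST /credit` with a JSON body containing `address` and `denom`, and assumes `ncheq` as the base denomination; the faucet URL is hypothetical, and the address check is only a prefix test, not full bech32 validation.

```python
import json
import urllib.request

# Hypothetical faucet backend address; the CosmJS faucet is assumed to serve POST /credit.
FAUCET_URL = "https://faucet.example.com/credit"


def build_credit_request(address: str, denom: str = "ncheq",
                         faucet_url: str = FAUCET_URL) -> urllib.request.Request:
    """Build (but do not send) a faucet credit request for a cheqd testnet address."""
    if not address.startswith("cheqd1"):  # prefix check only; no bech32 checksum validation
        raise ValueError("expected a cheqd address starting with 'cheqd1'")
    body = json.dumps({"address": address, "denom": denom}).encode("utf-8")
    return urllib.request.Request(
        faucet_url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/json"},
    )
```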

WHY IS THIS VALUABLE?

This solution:

- Helps to keep the team focused on building, as no longer do we need to dedicate time for manually responding to requests for tokens.

- Creates a far more cost-effective way of handling testnet token distributions

- Can be utilised by developers to test cheqd functionality far more efficiently

- Can be used by other Cosmos projects to reduce operational overheads and reduce headaches around distributing testnet tokens

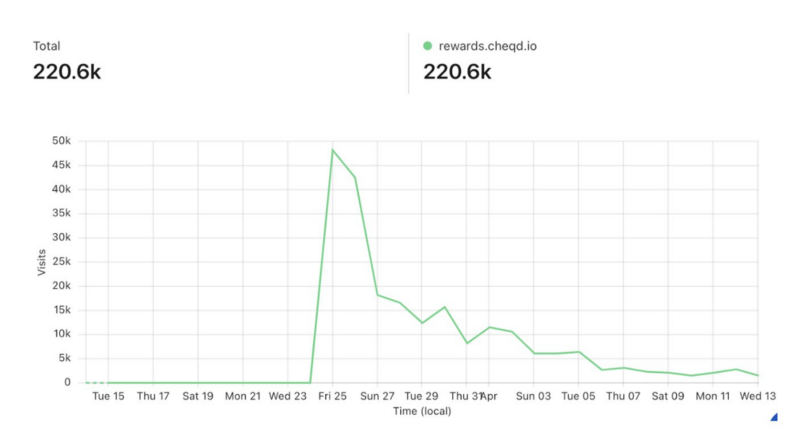

FRONTEND/BACKEND FOR RUNNING COSMOS SDK AIRDROPS

Github repositories: cheqd/airdrop-ui (FE), cheqd/airdrop-distribution (BE)

The airdrop tools, used for our community airdrop rewards site, are split into two repos: one for managing the actual distribution of airdrop rewards to wallets, and another for the frontend itself, which handles claims.

In terms of the frontend, we learnt that airdrop reward sites need to be more resilient to traffic spikes than most websites because, when announced, community members will tend to flock to the site to claim their rewards generating a large spike in traffic, followed by a period of much lower traffic.

This type of traffic pattern can make prepping the server to host airdrop claim websites particularly difficult. For example, many projects will choose to purchase a large server capacity to prevent server lag, whilst others may simply become overwhelmed with the traffic.

To manage this, the frontend site was developed to work with Cloudflare Workers, a serverless and highly-scalable platform so that the airdrop reward site could handle these spikes in demand.

On the backend we also needed to build something that could manage a surge in demand whilst providing a highly scalable and fast way of completing mass distributions. Initially our implementation struggled with the number of claims resulting in an excessive wait to receive rewards in the claimant’s wallet. To improve this we used 2 separate CosmJS-based Cloudflare Workers scripts; one which lined up claims in 3 separate queues (or more if we wanted to scale further), and a second distributor script that is instantiated dependent on the number of queues (i.e. 3 queues would require 3 distribution workers).
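
The queue-and-distributor pattern described above can be sketched in Python, with one thread per queue standing in for one Cloudflare Worker per queue. Claims are spread round-robin across the queues, and each distributor drains only its own queue in batches. `send_batch` is a hypothetical callback (for example, one multi-send transaction per batch); the batch size and queue count are illustrative, not the values we used.

```python
from collections import deque
from concurrent.futures import ThreadPoolExecutor


def enqueue_claims(claims, num_queues=3):
    """Spread claims round-robin across num_queues independent queues."""
    queues = [deque() for _ in range(num_queues)]
    for index, claim in enumerate(claims):
        queues[index % num_queues].append(claim)
    return queues


def drain_queue(queue, batch_size, send_batch):
    """Distributor loop: send claims from one queue in batches of at most batch_size."""
    sent = 0
    while queue:
        batch = [queue.popleft() for _ in range(min(batch_size, len(queue)))]
        send_batch(batch)  # hypothetical: e.g. one multi-send transaction per batch
        sent += len(batch)
    return sent


def distribute(claims, send_batch, num_queues=3, batch_size=50):
    """Run one distributor per queue concurrently; return the number of claims sent per queue."""
    queues = enqueue_claims(claims, num_queues)
    with ThreadPoolExecutor(max_workers=num_queues) as pool:
        return list(pool.map(lambda q: drain_queue(q, batch_size, send_batch), queues))
```

Because each distributor owns exactly one queue, no two workers ever pick up the same claim, and adding capacity is a matter of raising `num_queues`.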

There is no hiding that we ran into some hiccups, in part due to our Cloudflare Worker approach, during our Cosmos Community Mission 2 Airdrop. We have documented all of the issues we ran into during our airdrop and the lessons learnt in our airdrop takeaway blog post. What is important to explain is that:

- The reward site using Cloudflare Workers scaled very well in practice, with no hiccups;

- We had problems with the way we collated data, but the fundamental Cloudflare Workers infrastructure we ended up with, after having to refactor for our initial mistakes, is battle tested, highly efficient and resilient.

WHY IS THIS VALUABLE?

Any project using the Cosmos SDK and looking to carry out an airdrop or community rewards program can now use our open-sourced frontend UI and distribution repositories to run a smooth and efficient process for their community, avoiding the server-capacity and distribution problems we ran into.

We would much rather other projects do not make the same mistakes as we did when we initially started our airdrop process. What we have come away with, in terms of infrastructure and lessons learned, should be an example of the do’s and the not-to-do’s when carrying out a Cosmos based airdrop.

USEFUL COSMOS DATA APIS FOR DEVELOPERS AND PRODUCT MANAGERS

Github repository: cheqd/data-api

We found on our journey that there’s a LOT of data we needed APIs for, but couldn’t fetch directly from the base Cosmos SDK.

As Cosmonauts are well aware, the Cosmos SDK offers APIs for built-in modules over gRPC, REST and Tendermint RPC. However, we noticed a few it can’t provide, so we built them:

- Total Supply

- Circulating Supply

- Vesting Account Balance

- Liquid Account Balance

- Total Account Balance

This collection of custom APIs can be deployed as a Cloudflare Worker or compatible serverless platforms.

Further specifics about what these APIs mean can be found within our repository Readme.
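
The precise definitions of these metrics are in the repository README, so the following Python sketch uses common, assumed definitions rather than the data-api implementation: linear vesting as in a Cosmos SDK continuous vesting account, the still-vesting (locked) balance as the unvested remainder, liquid balance as total balance minus the locked amount, and circulating supply as total supply minus the balances of accounts you choose to treat as non-circulating. Amounts are integers in the base denomination; delegations are ignored, and the floor division here may round differently from the SDK.

```python
def vested_amount(original_vesting: int, start_time: int, end_time: int, now: int) -> int:
    """Linearly vested amount, as for a Cosmos SDK continuous vesting account (floored here)."""
    if now <= start_time:
        return 0
    if now >= end_time:
        return original_vesting
    return original_vesting * (now - start_time) // (end_time - start_time)


def account_balances(total_balance: int, original_vesting: int,
                     start_time: int, end_time: int, now: int) -> dict:
    """Split an account's balance into still-vesting (locked) and liquid parts."""
    locked = original_vesting - vested_amount(original_vesting, start_time, end_time, now)
    # Simplification: delegated vesting/free amounts are ignored.
    return {
        "total": total_balance,
        "vesting": locked,
        "liquid": max(total_balance - locked, 0),
    }


def circulating_supply(total_supply: int, non_circulating_balances: list) -> int:
    """Total supply minus balances of accounts treated as non-circulating (your own list)."""
    return total_supply - sum(non_circulating_balances)
```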

Why is this valuable?

These APIs are useful for multiple reasons:

- Applying for listings on exchanges requires many of these APIs upfront

- Auditing and analysing the health of a network

- Creating forecasts and projections based on network usage

- Providing transparency of metrics to the network’s community

Through open sourcing these APIs, we want to provide an easy way for all other Cosmos projects to track these metrics, hugely reducing the time and energy needed to source these metrics from scratch.

COSMOS CROSS CHAIN ADDRESS CONVERTOR CLI

Github repository: cheqd/cosmjs-cli-converter

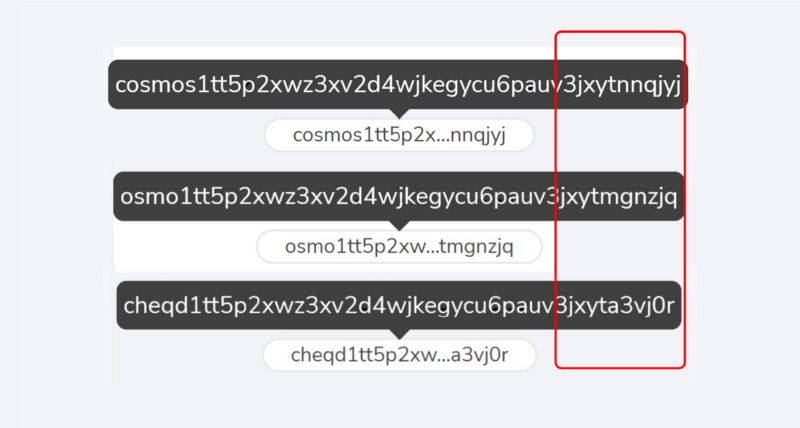

There is an assumption in the Cosmos ecosystem that wallet addresses across different chains, such as Cosmos (ATOM), Osmosis (OSMO) and cheqd (CHEQ), are all identical, because they look very similar. However, each chain’s wallet address is actually unique.

Interestingly, because these networks use a common #BIP44 derivation path, each network’s wallet address can be derived from the Cosmos Hub wallet address. Using one derivation path means that users can use one secret recovery phrase and core account to interact with multiple networks.

Our cross-chain address converter automates deriving any chain’s address from a Cosmos address. We’ve seen examples of this before, but they are mostly designed for one-off conversions in a browser rather than large-scale batch conversions. Our converter can process 200k+ addresses in a few minutes, whereas doing this with existing CLI tools or shell scripts can take hours.
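
Because chains that share the same derivation path also share the same underlying account bytes, converting an address only requires decoding its bech32 string and re-encoding those bytes with the target chain's prefix (`cosmos`, `osmo`, `cheqd`). The sketch below is a stdlib-only Python implementation of that idea following the BIP-0173 bech32 algorithm; it is not the code in cheqd/cosmjs-cli-converter, and it does not enforce BIP-0173's 90-character length limit.

```python
# Bech32 helpers following the BIP-0173 reference algorithm.
CHARSET = "qpzry9x8gf2tvdw0s3jn54khce6mua7l"
GENERATOR = [0x3B6A57B2, 0x26508E6D, 0x1EA119FA, 0x3D4233DD, 0x2A1462B3]


def _polymod(values):
    chk = 1
    for value in values:
        top = chk >> 25
        chk = ((chk & 0x1FFFFFF) << 5) ^ value
        for i in range(5):
            if (top >> i) & 1:
                chk ^= GENERATOR[i]
    return chk


def _hrp_expand(hrp):
    return [ord(c) >> 5 for c in hrp] + [0] + [ord(c) & 31 for c in hrp]


def _checksum(hrp, data):
    polymod = _polymod(_hrp_expand(hrp) + data + [0] * 6) ^ 1
    return [(polymod >> 5 * (5 - i)) & 31 for i in range(6)]


def _convertbits(data, frombits, tobits, pad):
    """Regroup a sequence of frombits-bit values into tobits-bit values."""
    acc, bits, out = 0, 0, []
    maxv = (1 << tobits) - 1
    max_acc = (1 << (frombits + tobits - 1)) - 1
    for value in data:
        if value < 0 or value >> frombits:
            raise ValueError("invalid value for bit conversion")
        acc = ((acc << frombits) | value) & max_acc
        bits += frombits
        while bits >= tobits:
            bits -= tobits
            out.append((acc >> bits) & maxv)
    if pad and bits:
        out.append((acc << (tobits - bits)) & maxv)
    elif not pad and (bits >= frombits or (acc << (tobits - bits)) & maxv):
        raise ValueError("invalid padding")
    return out


def bech32_decode(address):
    """Return (hrp, 5-bit data without checksum); raise ValueError if invalid."""
    if address.lower() != address and address.upper() != address:
        raise ValueError("mixed-case bech32 string")
    address = address.lower()
    pos = address.rfind("1")
    if pos < 1 or pos + 7 > len(address):
        raise ValueError("missing separator or checksum")
    hrp, data = address[:pos], [CHARSET.find(c) for c in address[pos + 1:]]
    if -1 in data:
        raise ValueError("invalid bech32 character")
    if _polymod(_hrp_expand(hrp) + data) != 1:
        raise ValueError("bad checksum")
    return hrp, data[:-6]


def decode_address(address):
    hrp, data = bech32_decode(address)
    return hrp, bytes(_convertbits(data, 5, 8, pad=False))


def encode_address(hrp, raw):
    data = _convertbits(raw, 8, 5, pad=True)
    return hrp + "1" + "".join(CHARSET[d] for d in data + _checksum(hrp, data))


def convert_address(address, new_prefix):
    """Re-encode the same account bytes under another chain's prefix."""
    _, raw = decode_address(address)
    return encode_address(new_prefix, raw)


def convert_many(addresses, new_prefix):
    """Batch conversion: one pure function call per address, no network access."""
    return [convert_address(a, new_prefix) for a in addresses]
```

Since each conversion is pure local computation, batch jobs scale with CPU rather than with RPC round-trips.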

Why is this valuable?

This is valuable since it can automate airdrops or distributions to any account, just from a Cosmos Hub address in bulk, making data calculations far more efficient.

For new chains in the Cosmos Ecosystem, this makes it much easier for the core team and Cosmonauts to discover and utilise their account addresses and carry out distributions.

Conclusion

Phew! There’s a lot here, but we really want to make sure everything we do for cheqd is useful far beyond our project. Contributing back to the Web3 and SSI community is a shared belief across the cheqd team; it is one of our foundational principles.

As always, we’d love to hear your thoughts on our writing and what this means for your company. Feel free to contact the product team directly — [email protected], or alternatively start a thread in our Discord.